The Simplest DSGE Model & the Blanchard-Kahn Method

Macroeconomics (M8674), April 13, 2026

Vivaldo Mendes, ISCTE

vivaldo.mendes@iscte-iul.pt

1. Introduction

What is a DSGE macroeconomic model?

- D for dynamics: the economy evolves over time; economic decisions are made over time

- S for stochastic: the economy is exposed to external shocks that cannot be anticipated or forecasted

- G for general: considers all markets that are important for the functioning of a modern economy

- E for equilibrium: private agents and public decision-making institutions try to do the best they can with all available information (optimal decision making)

How relevant are DSGE models?

As Stanley Fischer put it:

“Let me turn to […] macroeconomic models and their role in assisting the FOMC’s decisionmaking. The Board staff maintains several models; I will focus on the FRB/US model, the best known and most used of the models the Board staff has at its disposal: an estimated, large-scale, general-equilibrium, New Keynesian model.”

in: “I’d rather have Bob Solow than an econometric model, but …”, Speech by Stanley Fischer, Vice Chair of the Board of Governors of the Federal Reserve System, at the Warwick Economics Summit, 11 February 2017.

The simplest possible DSGE model

- The model is linear.

- It has three types of variables:

- a forward-looking variable/block: \(\color{blue}{y_t}\)

- a predetermined variable/block: \(\color{blue}{x_t}\)

- a contemporaneous (or static) variable/block: \(\color{blue}{z_t}\)

- It is an uncoupled model: each variable/block can be solved separately from all other variables/blocks.

- This property means that we can solve the model with pencil and paper.

The three equations

The first two equations below are familiar from the previous week; the third is new:

\[ \begin{aligned} x_{t} &= \phi+\rho x_{t-1}+ \varepsilon_{t}^x \quad , & \varepsilon_{t}^x \sim {\cal N}(0, \sigma^{2}) \\[4pt] y_{t} &= \alpha+\beta \mathbb{E}_{t} y_{t+1}+ \theta x_{t} \\[4pt] z_{t} &= \varphi x_{t}+ \mu y_{t} \end{aligned} \]

- \(x_t\) is a backward-looking (or pre-determined) variable

- \(\varepsilon_t^x\) is a random shock

- \(y_t\) is a forward-looking variable

- \(z_t\) is a contemporaneous (or static) variable

- \(\{\phi, \rho, \alpha,\beta, \theta, \varphi, \mu\}\) are parameters

2. Solution: backward-looking block

By pencil-and-paper

Solution to the backward looking block

To avoid explosive behavior in the solution obtained in the previous slide: \[x_{t} = \color{teal} \sum_{i=0}^{n-1} \rho^{i} \phi+ \color{blue}\rho^{n} x_{t-n}+ \color{red}\sum_{i=0}^{n-1} \rho^{i} \varepsilon_{t-i} \color{black}\]

… we have to impose the condition: \(|\rho|<1\).
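The expression above comes from substituting the equation into itself repeatedly. As a sketch (writing \(\varepsilon_t\) for \(\varepsilon_t^x\), as in the rest of this section), the first two backward substitutions show the pattern:

\[ \begin{aligned} x_{t} &= \phi+\rho x_{t-1}+\varepsilon_{t} \\ &= \phi+\rho\left(\phi+\rho x_{t-2}+\varepsilon_{t-1}\right)+\varepsilon_{t} = (1+\rho)\phi+\rho^{2} x_{t-2}+\varepsilon_{t}+\rho \varepsilon_{t-1} \\ &= (1+\rho+\rho^{2})\phi+\rho^{3} x_{t-3}+\varepsilon_{t}+\rho \varepsilon_{t-1}+\rho^{2} \varepsilon_{t-2} \end{aligned} \]

After \(n\) substitutions this yields the general expression with the constant term, the term in \(x_{t-n}\), and the sum of past shocks.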

If \(|\rho|<1\), the term \(\rho^{n} x_{t-n}\) vanishes as \(n \rightarrow \infty\), and the solution to this block is: \[ x_{t} = \color{teal} \sum_{i=0}^{n-1} \rho^{i} \phi + \color{red}\sum_{i=0}^{n-1} \rho^{i} \varepsilon_{t-i} \color{black} = \color{teal}{\underbrace{\frac{\phi}{1-\rho}}_{=\overline{x}}} + \color{red}{\sum_{i=0}^{n-1} \rho^{i} \varepsilon_{t-i}} \tag{1}\]

… where \(\overline{x}\) is the deterministic steady state of \(x_t\)

A numerical example

Consider the following parameter values: \[\alpha = 0\ , \ \theta =1 \ , \ \rho=0.5 \ , \ \beta = 0.75 \ , \ \phi=0\]

In the previous slide, we got the solution: \[ x_t= \color{teal}{\underbrace{\frac{\phi}{1-\rho}}_{=\overline{x}}} + \color{red}{\sum_{i=0}^{n-1} \rho^i \varepsilon_{t-i}} \tag{1'} \]

So, with those parameter values, eq. (1’) can be rewritten as: \[ x_t= \color{teal}{\underbrace{\frac{0}{1-0.5}}_{=\overline{x}}} + \color{red}{\sum_{i=0}^{n-1} 0.5^i \cdot \varepsilon_{t-i}} = \color{teal}{\underbrace{0}_{=\overline{x}}} + \color{red}{\sum_{i=0}^{n-1} 0.5^i \cdot \varepsilon_{t-i}} \tag{2} \]

A numerical example (cont.)

In eq. (2) in the previous slide, we got: \[ x_t= \color{teal}{\underbrace{0}_{=\overline{x}}} + \color{red}{\sum_{i=0}^{n-1} 0.5^i \cdot \varepsilon_{t-i}} \tag{2'} \]

From eq. (2’), we can easily conclude:

- The deterministic steady state is \(\overline{x}=0\).

- The current value of \(x_t\) depends only on the current and past shocks it has suffered.

Consider that the process is at its deterministic steady state \((\overline{x}=0)\), and suffers a positive shock of \(+1\) at period \(t-3\).

What happens to the value of \(x_t\) over time, if there are no more shocks?

A simple solution

Using the original equation \((x_{t}=\phi+\rho x_{t-1} +\varepsilon_t)\), and \(\{\phi=0 \ , \rho=0.5\}\): \[x_{t-3}= 0 + 0.5 x_{t-4} + \varepsilon_{t-3}\]

But \(x_{t-4}=0\), as it was assumed that \(x_{t-4}=\overline{x}=0\). So, we have: \[x_{t-3}= 0 + 0.5 \times 0 + 1 = 1\]

As there are no more shocks in this exercise, for period \(t-2\) we obtain: \[x_{t-2} = 0 + 0.5 \times x_{t-3}+ 0 = 0.5 \times 1 = 0.5\]

Doing the same for \(x_{t-1}\) and \(x_t\), we get: \[x_{t-1}=0.5 \times x_{t-2}+ 0=0.5 \times 0.5 = 0.25\] \[\qquad x_t=0.5 \times x_{t-1}+0 =0.5 \times 0.25=0.125\]

So, the solution will be: \(x_{t-3}=1 \ , \ x_{t-2}=0.5 \ , \ x_{t-1}=0.25 \ , \ x_{t}=0.125\)
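The recursion above can be reproduced in a few lines of Julia. This is only a minimal sketch: the function name `ar1_path` and its arguments are illustrative choices, not taken from the course notebook.

```julia
# Iterate x_t = ϕ + ρ x_{t-1} + ε_t forward from the steady state.
# `shocks` holds ε for each period, in chronological order.
function ar1_path(ρ, ϕ, shocks; x0 = ϕ / (1 - ρ))
    x = zeros(length(shocks))
    x_prev = x0                      # value of x before the first period
    for (k, ε) in enumerate(shocks)
        x[k] = ϕ + ρ * x_prev + ε
        x_prev = x[k]
    end
    return x
end

# Unit shock at t-3, no shocks afterwards (ρ = 0.5, ϕ = 0)
x_path = ar1_path(0.5, 0.0, [1.0, 0.0, 0.0, 0.0])
println(x_path)   # x_{t-3}, x_{t-2}, x_{t-1}, x_t
```

Each pass through the loop is one of the substitutions done by hand above, so the cost grows with the number of periods between the shock and the date of interest.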

A more efficient solution

The method used above is not very efficient. Suppose the shock occurred long ago, for example, \(\varepsilon_{t-50}=1\), and we wanted to compute the value of \(x_{t}\).

According to the previous method, we would have to perform 50 operations to get the value of \(x_{t}\).

There is a better way to obtain that value: use eq. (2’) directly: \[x_t = 0 + \sum_{i=0}^{n-1} \rho^i \varepsilon_{t-i}\]

So, what is the value of \(x_{t}\), given that a shock occurred 3 periods ago \((\varepsilon_{t-3}=1)\)? \[i=3 \Rightarrow x_{t}=0+0.5^i \varepsilon_{t-i} =0.5^3 \times \varepsilon_{t-3} = 0.5^3 \times 1=0.125\]

A more efficient solution (cont.)

In the same way, what is the value of \(x_{t}\), given that a shock occurred 2 periods ago \((\varepsilon_{t-2}=1)\)? \[i=2 \Rightarrow x_{t}=0+0.5^i \varepsilon_{t-i} =0.5^2 \times \varepsilon_{t-2} = 0.5^2 \times 1=0.25\]

Repeating the same exercise, we can collect the other results.

For example, what is the value of \(x_t\) if the shock occurs in the current period? \[i=0 \Rightarrow x_{t}=0+0.5^i \varepsilon_{t-i} =0.5^0 \times \varepsilon_{t-0} = 0.5^0 \times 1=1\]

So, the solution will be the same: \[x_{t-3}=1 \ , \ x_{t-2}=0.5 \ , \ x_{t-1}=0.25 \ , \ x_{t}=0.125\]
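As a rough illustration of the shortcut, the closed form can be evaluated directly in Julia. The helper names `irf` and `ma_sum` are hypothetical; they simply encode \(\rho^i\) and the sum in eq. (2’).

```julia
# Response of x, i periods after a unit shock, starting from x̄ = 0
irf(ρ, i) = ρ^i

# Eq. (2') with a whole history of shocks: lagged_shocks[k] holds ε_{t-(k-1)}
ma_sum(ρ, lagged_shocks) = sum(ρ^(k - 1) * ε for (k, ε) in enumerate(lagged_shocks))

ρ = 0.5
path = [irf(ρ, i) for i in 0:3]          # x_{t-3}, x_{t-2}, x_{t-1}, x_t
x_t = ma_sum(ρ, [0.0, 0.0, 0.0, 1.0])    # only ε_{t-3} = 1
x_far = irf(ρ, 50)                       # shock 50 periods ago: a single operation
println(path)
println(x_t)
```

The design point is the one made in the text: the closed form needs one power of \(\rho\) per shock, regardless of how long ago the shock occurred.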

Deterministic part vs random part

As expected, the deterministic part of \(x_t\) remains constant \((\overline{x}=0)\).

The change occurs in the random part of this process \((x^\varepsilon_{t-i})\):

\[x_{t-3} = \underbrace{0}_{\overline{x}}+\underbrace{1}_{x^\varepsilon_{t-3}} \ \ , \ x_{t-2} = \underbrace{0}_{\overline{x}}+\underbrace{0.5}_{x^\varepsilon_{t-2}} \ \ , \ x_{t-1} = \underbrace{0}_{\overline{x}}+\underbrace{0.25}_{x^\varepsilon_{t-1}} \ \ , \ ...\]

3. Solution: forward-looking block

By pencil-and-paper

Solution avoiding explosive behavior

To avoid explosive behavior in the solution \[y_{t} =\color{teal}\sum_{i=0}^{n-1} \beta^{i} \alpha+ \color{blue} \beta^{n} \mathbb{E}_{t} y_{t+n}+ \color{red} \sum_{i=0}^{n-1} \theta \beta^{i} \mathbb{E}_{t} x_{t+i}\color{black}\]

… we have to impose the condition: \(|\beta|<1\).
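A sketch of where this expression comes from: lead the equation one period, substitute it back, and use the law of iterated expectations, \(\mathbb{E}_t \mathbb{E}_{t+1} y_{t+2} = \mathbb{E}_t y_{t+2}\) (a standard property of conditional expectations, assumed here rather than stated on the slides):

\[ \begin{aligned} y_{t} &= \alpha+\beta \mathbb{E}_{t} y_{t+1}+\theta x_{t} \\ &= \alpha+\beta \mathbb{E}_{t}\left(\alpha+\beta \mathbb{E}_{t+1} y_{t+2}+\theta x_{t+1}\right)+\theta x_{t} \\ &= (1+\beta)\alpha+\beta^{2} \mathbb{E}_{t} y_{t+2}+\theta x_{t}+\theta \beta \mathbb{E}_{t} x_{t+1} \end{aligned} \]

Repeating the substitution \(n\) times gives the three terms in the expression above, with \(\mathbb{E}_t x_t = x_t\).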

We get the following solution to this block at the \(\color{blue}n\)-th iteration, as \(n \rightarrow \infty\): \[ y_{t}= \color{teal}{\sum_{i=0}^{n-1} \alpha \beta^{i}} + \color{red}{\sum_{i=0}^{n-1} \theta \beta^{i} \mathbb{E}_{t} x_{t+i}} \tag{3}\]

Because \(|\beta|<1\), the first sum converges to \(\alpha/(1-\beta)\) as \(n \rightarrow \infty\), so eq. (3) can be written as: \[ y_{t}= \color{teal}{\frac{\alpha}{1-\beta}} + \color{red}{\sum_{i=0}^{n-1} \theta \beta^i \mathbb{E}_t x_{t+i}} \tag{4}\]

Unconditional vs conditional expectations

- The solution to eq.(4) depends on the type of information we may have about the observations of \(x_t\) over time.

- That is: what is the value of \(\mathbb{E}_t x_{t+i}\) in eq. (4)?

- It depends on whether we compute the unconditional mean of \(x_{t+i}\) , or its conditional mean.

- The unconditional mean is just the deterministic value of its steady state: \(\overline{x}\).

- The conditional mean is computed on the basis that we know the value of \(x_t\).

- Next we show how to compute these two expected values, but we will concentrate on the conditional case.

\(y_t\) solution: unconditional expectations (skip this)

Under unconditional expectations, \(\mathbb{E}_t x_{t+i}\) is replaced by the deterministic steady state: \[\mathbb{E}_t x_{t+i}=\overline{x}=\frac{\phi}{ 1-\rho} \tag{5}\]

Therefore, the solution to \(y_t\) is obtained by inserting eq. (5) into (4): \[ y_{t}= \color{teal}{\frac{\alpha}{1-\beta}} + \color{red}{\sum_{i=0}^{n-1} \theta \beta^i \mathbb{E}_t x_{t+i}}\]

\[ y_{t}= \color{teal}{\frac{\alpha}{1-\beta}} + \color{red}{\sum_{i=0}^{n-1} \theta \beta^i \frac{\phi}{ 1-\rho}} = \color{teal}{\frac{\alpha}{1-\beta}} + \color{red}{\frac{\theta \frac{ \phi}{1-\rho}}{ 1-\beta}} \]

\[y_t= \color{teal}{\frac{\alpha}{1-\beta}}+\color{red}{\frac{\theta \phi}{( 1-\beta)(1-\rho)}} \tag{6}\]

\(y_t\) solution: unconditional expectations (skip this)

In the previous slide we obtained that the solution to \(y_t\) is given by: \[y_t= \color{teal}{\frac{\alpha}{1-\beta}}+\color{red}{\frac{\theta \phi}{( 1-\beta)(1-\rho)}} \tag{6a}\]

Considering the information we have about the parameters: \[\alpha = 0\ , \ \theta =1 \ , \ \rho=0.5 \ , \ \beta = 0.75 \ , \ \phi=0\]

So, we get: \[y_{t} = \color{teal}{\frac{\alpha}{1-\beta}} + \color{red}{\frac{\theta \phi}{ (1-\beta)(1-\rho)}} = \color{teal}{\frac{0}{1-0.75}} + \color{red}{\frac{1 \times 0}{ (1-0.75)(1- 0.5)}} =0 \tag{6b}\]

Therefore, with unconditional expectations, the value of \(y_t\) will be: \[y_t=\overline{y}=0.\]

\(y_t\) solution with conditional expectations

As the expected-conditional value of \(\mathbb{E}_t x_{t+i}\) is given by: \[\mathbb{E}_t x_{t+i}= \overline{x} + \rho^{i} x^\varepsilon _t = \frac{\phi}{1-\rho}+\rho^{i} x^\varepsilon _t \tag{7}\]

And as we have the information that \[\alpha = 0\ , \ \theta =1 \ , \ \rho=0.5 \ , \ \beta = 0.75 \ , \ \phi=0\]

Then,

\[\mathbb{E}_t x_{t+i}= \frac{0}{1-0.5}+ 0.5^i x^\varepsilon_t= 0+0.5^{i} x^\varepsilon_t \tag{7a}\]

Therefore, the solution to \(y_t\) is obtained by inserting eq. (7a) into (4): \[ y_{t}= \color{teal}{ \frac{\alpha}{1-\beta} } + \color{red}{\sum_{i=0}^{n-1} \theta \beta^{i} \mathbb{E}_t x_{t+i}} = \color{teal}{ \frac{0}{1-0.75} } + \color{red}{\sum_{i=0}^{n-1} 1 \times 0.75^{i} \times 0.5^{i} x^\varepsilon_t} \tag{7b}\]

\(y_t\) solution with conditional expectations (cont.)

From eq.(7b) in the previous slide, we got: \[ y_{t}= \color{teal}{ \underbrace{0}_{=\overline{y}} } + \color{red}{\sum_{i=0}^{n-1} 1 \times 0.75^{i} \times 0.5^{i} x^\varepsilon_t}\]

This is a geometric sum, with a solution: \[y_{t}= \underbrace{0}_{=\overline{y}} +\frac{1}{1-0.75 \times 0.5} x^\varepsilon_t = 0 + 1.6x^\varepsilon_t \tag{8}\]

Therefore, it is easy to see that: \[y_t= \overline{y}+ 1.6x^\varepsilon_t \tag{9}\]

If \(x_t\) moves away from its steady-state \((x^\varepsilon _t \neq 0 )\), \(y_t\) will change because: \[\partial y_t / \partial x^\varepsilon_t = 1.6 \]
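The coefficient \(1.6\) is the limit of the geometric sum \(\sum_i \theta (\beta\rho)^i = \theta/(1-\beta\rho)\). A small numerical check in Julia, as a sketch (the names `coef_closed` and `coef_truncated` are illustrative only):

```julia
β, ρ, θ = 0.75, 0.5, 1.0

# Closed form of the geometric sum: θ / (1 - βρ)
coef_closed = θ / (1 - β * ρ)

# Truncated sum over the first n terms, as in eq. (7b)
coef_truncated(θ, β, ρ, n) = sum(θ * (β * ρ)^i for i in 0:n-1)

println(coef_closed)
for n in (1, 5, 20, 60)
    println(n, " terms: ", coef_truncated(θ, β, ρ, n))
end
```

The truncated sums approach the closed form quickly because \(\beta\rho = 0.375\) is well inside the unit circle.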

\(y_t\) solution with conditional expectations (cont.)

As from a previous slide we know that \(x\) suffered a shock in period \(t-3\): \[x_{t-3}=1 \ , \ x_{t-2}=0.5 \ , \ x_{t-1}=0.25 \ , \ x_{t}=0.125\]

It is very simple to calculate the values of \(y_t\) by using eq. (9): \[y_{t-3}=0+1.6 x_{t-3}=1.6 \times 1 = 1.6\] \[y_{t-2}=0+1.6 x_{t-2}=1.6 \times 0.5 = 0.8\] \[y_{t-1}=0+1.6 x_{t-1}=1.6 \times 0.25 = 0.4\] \[y_{t} =0+1.6 x_{t} \ \ \ =1.6 \times 0.125 = 0.2\]

So, \(y\) moved away from its steady state \((\overline{y}=0)\) due to the impact that \(x\) exerts upon \(y\).

And \(x\) moved away from its steady state \((\overline{x}=0)\) due to the shock it suffered in period \(t-3\).

4. Solution: static block

By pencil-and-paper

Solution: no iterations needed

The static block is given by the equation: \[z_{t}= \varphi x_{t}+ \mu y_{t}\]

From the previous slide, we know that: \[x_{t-3} = 1 \ , x_{t-2} = 0.5 \ , x_{t-1} = 0.25 \ , x_{t} = 0.125\]

From eq. (9), we know that: \(y_t=0+1.6x_t\). So: \[y_{t-3} = 1.6 \ , y_{t-2} = 0.8 \ , y_{t-1} = 0.4 \ , y_{t} = 0.2\]

Once we know the values of \(x\) and \(y\), calculating \(z\) is immediate.

Assuming that \(\varphi = 2.5\) and \(\mu=-2\), we get: \[z_{t-3} = 2.5x_{t-3}-2y_{t-3}=-0.7 \ \ , \ z_{t-2} =...\]
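The remaining values of \(z\) follow the same arithmetic. A minimal Julia sketch, using the parameter values from the text (the variable names are illustrative):

```julia
ρ, β, θ = 0.5, 0.75, 1.0
φ, μ = 2.5, -2.0                 # φ is \varphi in the static equation

x = [ρ^i for i in 0:3]           # x_{t-3}, x_{t-2}, x_{t-1}, x_t after a unit shock
y = (θ / (1 - β * ρ)) .* x       # eq. (9): y = 1.6 x when the steady states are zero
z = φ .* x .+ μ .* y             # static block: no iteration needed

println("x = ", x)
println("y = ", y)
println("z = ", z)
```

Since \(y\) is proportional to \(x\), so is \(z\): here \(z_t = (2.5 - 2 \times 1.6)\, x_t = -0.7\, x_t\) in every period.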

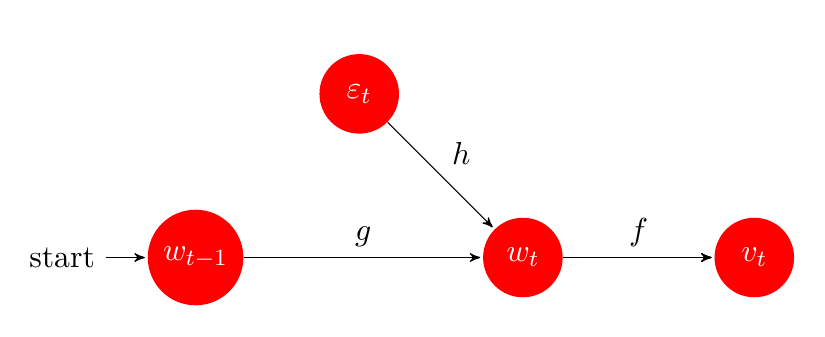

An image of our simple model


5. The Blanchard-Kahn conditions

Blanchard, O. and Kahn, C. M. (1980). The solution of linear difference models under rational expectations. Econometrica, 48(5), 1305-1311.

More complicated models

Most models in modern macroeconomics cannot be solved by pencil and paper.

- They may be non-linear.

- Their blocks may be coupled, in contrast to the case above.

- Blanchard and Kahn (1980) developed a technique that allows us to solve any linear model, no matter how intricate its blocks might be.

- This technique is based on the Jordan decomposition of square matrices.

- In this class, we do not expect students to replicate the proof, but students should understand its logic.

- It is crucial to understand what the Blanchard-Kahn stability conditions mean.

The strategy of solving a DSGE model

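The goal is to find \(f\), \(g\), and \(h\) such that \(v_{t}=f \cdot w_{t}\) and \(w_{t+1}=g \cdot w_{t}+h \cdot \varepsilon_{t+1}\), where:

- \(w_{t}\) is a predetermined variable (or set of variables)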

- \(\varepsilon_{t}\) is a random shock (or a sequence of random shocks)

- \(v_{t}\) is a forward-looking variable (or a set of variables)

- \(g\) and \(h\) solve the predetermined variable (or block)

- \(f\) solves the forward-looking variable (or block)


The model in state-space representation

Write the model in state space form \[ \mathcal{A}\left[\begin{array}{l} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]=\mathcal{B}\left[\begin{array}{l} w_{t} \\ v_{t} \end{array}\right]+\mathcal{C}\left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ \varepsilon_{t+1}^{v} \end{array}\right]+\mathcal{D} \tag{9} \]

\(\mathcal{A}, \mathcal{B}, \mathcal{C}\) are square matrices representing the parametric structure of the model

\(w_{t}, v_{t}\) are vectors with the endogenous variables, and \(\varepsilon_{t}\) is a vector of exogenous random shocks. \(\mathbb{E}_{t}\) is the usual conditional expectations operator. \(\mathcal{D}\) is a vector with constants, and for simplicity, we drop it from the model.

Multiplying both sides of (9) by \(\mathcal{A}^{-1}\), leads to: \[ \left[\begin{array}{c} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]=\underbrace{\mathcal{A}^{-1} \mathcal{B}}_{\mathcal{R}}\left[\begin{array}{c} w_{t} \\ v_{t} \end{array}\right]+\underbrace{\mathcal{A}^{-1} \mathcal{C}}_{\mathcal{U}}\left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ \varepsilon_{t+1}^{v} \end{array}\right] \tag{10} \]

The Jordan Decomposition

Suppose we have a square matrix \(\mathcal{R}\)

The Jordan decomposition of \(\mathcal{R}\) is given by: \[ \mathcal{R}=P \Lambda P^{-1} \]

\(P\) contains as columns the eigenvectors of \(\mathcal{R}\)

\(\Lambda\) is a diagonal matrix containing the eigenvalues of \(\mathcal{R}\) on the main diagonal (this form assumes that \(\mathcal{R}\) is diagonalizable).

\(P^{-1}\) is the inverse of \(P\)
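In Julia, this decomposition is available through `eigen` from the standard library `LinearAlgebra`. The sketch below applies it to a small illustrative matrix: the \((x, y)\) part of the simple model with \(\rho=0.5\), \(\beta=0.75\), \(\theta=1\), written as \(x_{t+1}=\rho x_t\) and \(\mathbb{E}_t y_{t+1}=(-\theta x_t+y_t)/\beta\). Choosing this matrix as the example is an assumption made here for illustration.

```julia
using LinearAlgebra

ρ, β, θ = 0.5, 0.75, 1.0
R = [ρ 0.0; -θ/β 1/β]          # illustrative 2×2 matrix

F = eigen(R)                   # eigenvalues and eigenvectors
Λ = Diagonal(F.values)         # eigenvalues on the main diagonal
P = F.vectors                  # eigenvectors as columns

R_rebuilt = P * Λ * inv(P)     # should reproduce R up to rounding error

println("eigenvalues: ", F.values)
println("R ≈ P Λ P⁻¹ ? ", R_rebuilt ≈ R)
```

The eigenvectors returned by `eigen` are normalized, so \(P\) may differ from a hand-computed one by a scaling of its columns; the product \(P \Lambda P^{-1}\) is unaffected.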

Apply the Jordan Decomposition

Our system was given by (10): \[ \left[\begin{array}{c} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]= \mathcal{R}\left[\begin{array}{c} w_{t} \\ v_{t} \end{array}\right]+\mathcal{U}\left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ \varepsilon_{t+1}^{v} \end{array}\right] \tag{10'} \]

Apply the decomposition \(\mathcal{R}=P \Lambda P^{-1}\) to (10): \[ \left[\begin{array}{c} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]=P \Lambda P^{-1}\left[\begin{array}{c} w_{t} \\ v_{t} \end{array}\right]+\mathcal{U} \left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ \varepsilon_{t+1}^{v} \end{array}\right]\tag{11} \]

Multiply both sides by \(P^{-1}\): \[ P^{-1}\left[\begin{array}{c} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]=\Lambda P^{-1}\left[\begin{array}{c} w_{t} \\ v_{t} \end{array}\right]+\underbrace{P^{-1} \mathcal{U}}_{\mathcal{M}} \cdot\left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ \varepsilon_{t+1}^{v} \end{array}\right] \tag{12} \]

Matrices partition

Let us assume that there are no shocks affecting the forward-looking block: \[\varepsilon_{t}^v=0 \ , \ \forall t\]

Next, we apply a partition to the matrices: \(P^{-1}\), \(\Lambda\), \(\mathcal{M}\): \[\underbrace{\left[\begin{array}{cc} P_{11} & P_{12} \\ P_{21} & P_{22} \end{array}\right]\left[\begin{array}{c} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]}_{\mathbb{E}_t\left[\begin{array}{c} \widetilde{w}_{t+1} \\ \tilde{v}_{t+1} \end{array}\right]}=\left[\begin{array}{cc} \Lambda_{1} & 0 \\ 0 & \Lambda_{2} \end{array}\right] \underbrace{\left[\begin{array}{cc} P_{11} & P_{12} \\ P_{21} & P_{22} \end{array}\right]\left[\begin{array}{c} w_{t} \\ v_{t} \end{array}\right]}_{\left[\begin{array}{c} \widetilde{w}_{t} \\ \tilde{v}_{t} \end{array}\right]}+\underbrace{\left[\begin{array}{ll} \mathcal{M}_{11} & \mathcal{M}_{12} \\ \mathcal{M}_{21} & \mathcal{M}_{22} \end{array}\right]}_{M} \left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ 0 \end{array}\right]\]

Our transformed model looks much easier now:

\[\left[\begin{array}{c} \widetilde{w}_{t+1} \\ \mathbb{E}_{t} \tilde{v}_{t+1} \end{array}\right]=\left[\begin{array}{cc} \Lambda_{1} & 0 \\ 0 & \Lambda_{2} \end{array}\right]\left[\begin{array}{c} \widetilde{w}_{t} \\ \tilde{v}_{t} \end{array}\right]+ \left[\begin{array}{c} M_{11} \\ M_{21} \end{array}\right] \cdot \varepsilon_{t+1}^w\]

The solution to the model

- Using these partitions, the solution will be given by (see detailed demonstration in Appendix A): \[ \color{blue}{\begin{gathered} v_{t}^{*}=\underbrace{\left[-P_{22}^{-1} P_{21}\right]}_{f} \cdot w_{t}^{*} \\ \\ w_{t+1}^{*}=\underbrace{\left[G^{-1} \Lambda_{1} G\right]}_{g} \cdot w_{t}^{*}+\underbrace{\left[G^{-1} M_{11}\right]}_{h} \cdot \varepsilon_{t+1} \\ \\ \qquad \qquad\text{\color{black}{with}} \qquad \qquad G \equiv P_{11}-P_{12}\left(P_{22}\right)^{-1} P_{21} \qquad \qquad\qquad \qquad \qquad \end{gathered}} \]
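The three formulas can be collected in one small function. This is a sketch under stated assumptions, not the course notebook's code: the name `bk_solve` is hypothetical, the predetermined (non-forward-looking) variables are assumed to be ordered first, the shock vector is assumed to have the same ordering as the variables, and \(\mathcal{R}\) is assumed to be diagonalizable.

```julia
using LinearAlgebra

# Hypothetical helper: returns (f, g, h) for the system
# [w'; E v'] = R [w; v] + U ε, with n_pre predetermined variables ordered first.
function bk_solve(R, U, n_pre)
    F = eigen(R)
    order = sortperm(abs.(F.values))      # stable eigenvalues first
    λ = F.values[order]
    Pinv = inv(F.vectors[:, order])       # rows of P⁻¹ follow the ordering of λ
    M = Pinv * U

    pre = 1:n_pre
    fwd = (n_pre + 1):length(λ)
    P11, P12 = Pinv[pre, pre], Pinv[pre, fwd]
    P21, P22 = Pinv[fwd, pre], Pinv[fwd, fwd]
    Λ1 = Diagonal(λ[pre])
    M11 = M[pre, pre]

    f = -(P22 \ P21)                      # v = f w
    G = P11 - P12 * (P22 \ P21)
    g = G \ (Λ1 * G)                      # w' = g w + h ε
    h = G \ M11
    return f, g, h
end

# Illustration: the (x, y) part of the simple model
ρ, β, θ = 0.5, 0.75, 1.0
R = [ρ 0.0; -θ/β 1/β]
U = [1.0 0.0; 0.0 0.0]
f, g, h = bk_solve(R, U, 1)
println("f = ", f, "  g = ", g, "  h = ", h)
```

For this illustration the function should return \(f \approx 1.6\), \(g \approx 0.5\) and \(h \approx 1\), matching the pencil-and-paper results \(y_t = 1.6\,x_t\) and \(x_{t+1} = 0.5\,x_t + \varepsilon_{t+1}\). The backslash operator is used instead of explicit inverses, which is the usual Julia idiom for solving linear systems.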

Partition of matrices \(P^{-1}\), \(\Lambda\), \(\mathcal{M}\)

When solving these models, the most demanding task is to apply the correct partition to these three matrices.

Suppose a model with one backward-looking variable, one static variable, and one forward-looking variable (as in the simple model above).

The partitions should be as follows:

Partition of matrices \(P^{-1}\), \(\Lambda\), \(\mathcal{M}\)

2 forward-looking, 2 non-forward-looking variables


The Blanchard-Kahn stability conditions

- Suppose we have a model with 5 variables:

- 2 forward-looking

- 2 backward-looking (or predetermined)

- 1 contemporaneous (static)

- To secure a unique and stable solution, the matrix \(\cal{R}\) should provide:

- 2 eigenvalues greater than 1 in absolute value, \(|\lambda_1|, |\lambda_2| > 1\) (forward-looking block is stable)

- 2 eigenvalues less than 1 in absolute value, \(|\lambda_3|, |\lambda_4| < 1\) (backward-looking block is stable)

- 1 eigenvalue equal to 0, \(\lambda_5=0\) (the static variable has no dynamics of its own)

- If these conditions are violated, the model has no unique stable solution. With too many eigenvalues outside the unit circle, one of the blocks shows explosive behavior, which is at odds with what we observe in reality; with too few, the stable solution is not unique.
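The counting rule above can be checked mechanically. The following Julia sketch compares the number of eigenvalues outside the unit circle with the number of forward-looking variables; the function name `bk_check`, the returned symbols, and the tolerance are illustrative assumptions, and eigenvalues exactly on the unit circle are treated as not unstable.

```julia
using LinearAlgebra

# Hypothetical helper: classify a model by counting unstable eigenvalues of R
function bk_check(R, n_forward; tol = 1e-10)
    moduli = abs.(eigvals(R))
    n_unstable = count(m -> m > 1 + tol, moduli)
    if n_unstable == n_forward
        return :unique_stable          # Blanchard-Kahn conditions satisfied
    elseif n_unstable > n_forward
        return :no_stable_solution     # too many unstable eigenvalues
    else
        return :indeterminate          # too few unstable eigenvalues
    end
end

# Illustration: the (x, y) part of the simple model, one forward-looking variable
ρ, θ = 0.5, 1.0
R_of(β) = [ρ 0.0; -θ/β 1/β]

status_ok = bk_check(R_of(0.75), 1)    # eigenvalues 0.5 and 1/0.75
status_bad = bk_check(R_of(1.25), 1)   # eigenvalues 0.5 and 1/1.25
println(status_ok, "  ", status_bad)
```

In this illustration, \(\beta=0.75\) gives one eigenvalue outside the unit circle for one forward-looking variable, while \(\beta=1.25\) violates the condition \(|\beta|<1\) used in the pencil-and-paper solution.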

6. Back to the “simplest model”

Solving it with the Blanchard-Kahn method … and a computer

Prepare the model for matrix form

The original model: \[ \begin{aligned} x_{t} &= \phi+\rho x_{t-1}+\varepsilon_{t}^x \\ z_{t} &= \varphi x_{t}+\mu y_{t} \\ y_{t} &= \alpha+\beta \mathbb{E}_{t} y_{t+1}+\theta x_{t} \end{aligned} \]

To write the model in matrix form: all variables expressed at \(t+1\) on the system’s left side, those at \(t\) on the right side, and constants at the end:

\[ \begin{aligned} \color{blue} x_{t+1} &= \rho x_{t}+ \color{magenta} \varepsilon_{t+1}^x + \color{red} \phi \qquad \qquad\\ \color{blue} z_{t+1} - \varphi x_{t+1} - \mu \mathbb{E}_{t} y_{t+1} &= 0\\ \color{blue} \beta \mathbb{E}_{t} y_{t+1} &= -\theta x_t + y_t - \color{red} \alpha \end{aligned} \]

For simplicity, static variables are placed on the left-hand side.

The model in matrix form

Left-hand side: endogenous variables at \(t+1\)

Right-hand side: endogenous variables at \(t\), shocks, and constants \[ \begin{aligned} \color{blue} x_{t+1} &= \rho x_{t}+ \color{magenta} \varepsilon_{t+1}^x + \color{red} \phi \qquad \qquad\\ \color{blue} z_{t+1} - \varphi x_{t+1} - \mu \mathbb{E}_{t} y_{t+1} &= 0\\ \color{blue} \beta \mathbb{E}_{t} y_{t+1} &= -\theta x_t + y_t - \color{red} \alpha \end{aligned}\]

Detailed specification of the model: \[ \begin{aligned} \color{blue} 1 x_{t+1}+0 z_{t+1}+0 \mathbb{E}_{t} y_{t+1}&=\; \; \, \rho x_{t}+0 z_{t}+0 y_{t} + \color{magenta} 1 \varepsilon_{t+1}^{x} + 0 \varepsilon_{t+1}^{z}+ 0 \varepsilon_{t+1}^{y} \color{red} +\phi\\ \color{blue} -\varphi x_{t+1}+1 z_{t+1}- \mu \mathbb{E}_{t} y_{t+1} &=\ \ \ 0x_{t}+0 z_{t}+0 y_{t}+ \color{magenta} 0 \varepsilon_{t+1}^{x}+ 0 \varepsilon_{t+1}^{z} + 0 \varepsilon_{t+1}^{y}+ \color{red} 0 \\ \color{blue} 0 x_{t+1}+0 z_{t+1}+\beta \mathbb{E}_{t} y_{t+1}&=-\theta x_{t}+0 z_{t}+1 y_{t}+ \color{magenta} 0 \varepsilon_{t+1}^{x}+ 0 \varepsilon_{t+1}^{z} + 0 \varepsilon_{t+1}^{y} \color{red} -\alpha \end{aligned} \]

The model in matrix form (cont.)

Detailed specification of the model: \[\begin{aligned} \color{blue} 1 x_{t+1}+0 z_{t+1}+0 \mathbb{E}_{t} y_{t+1}&=\; \; \, \rho x_{t}+0 z_{t}+0 y_{t} + \color{magenta} 1 \varepsilon_{t+1}^{x} + 0 \varepsilon_{t+1}^{z}+ 0 \varepsilon_{t+1}^{y} \color{red} +\phi\\ \color{blue} -\varphi x_{t+1}+1 z_{t+1}- \mu \mathbb{E}_{t} y_{t+1} &=\ \ \ 0x_{t}+0 z_{t}+0 y_{t}+ \color{magenta} 0 \varepsilon_{t+1}^{x}+ 0 \varepsilon_{t+1}^{z} + 0 \varepsilon_{t+1}^{y}+ \color{red} 0 \\ \color{blue} 0 x_{t+1}+0 z_{t+1}+\beta \mathbb{E}_{t} y_{t+1}&=-\theta x_{t}+0 z_{t}+1 y_{t}+ \color{magenta} 0 \varepsilon_{t+1}^{x}+ 0 \varepsilon_{t+1}^{z} + 0 \varepsilon_{t+1}^{y} \color{red} -\alpha \end{aligned}\]

The model in state space representation: \[{\color{blue} \underbrace{\left[\begin{array}{ccc} 1 & 0 & 0 \\ -\varphi & 1 & -\mu \\ 0 & 0 & \beta \end{array}\right]}_{\cal{A}}\left[\begin{array}{l} x_{t+1} \\ z_{t+1} \\ \mathbb{E}_{t} y_{t+1} \end{array}\right]} = \underbrace{\left[\begin{array}{ccc} \rho & 0 & 0 \\ 0 & 0 & 0 \\ -\theta & 0 & 1 \end{array}\right]}_{\cal{B}} \left[\begin{array}{l} x_{t} \\ z_{t} \\ y_{t} \end{array}\right] + {\color{magenta} \underbrace{\left[\begin{array}{lll} 1 & 0 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 0 \end{array}\right]}_{\cal{C}} \left[\begin{array}{c} \varepsilon_{t+1}^{x} \\ \varepsilon_{t+1}^{z} \\ \varepsilon_{t+1}^{y} \end{array}\right]} + \underbrace{\left[\begin{array}{c} \color{red} \phi \\ \color{red} 0 \\ \color{red} - \alpha \end{array}\right]}_{\cal{D}} \]

The state space representation passed into Julia

A = zeros(3,3)

B = zeros(3,3)

C = zeros(3,3)

A[1,1] = 1.0

A[2,1] = -φ   # φ is \varphi, the coefficient on x in the static equation

A[2,2] = 1.0

A[2,3] = -μ

A[3,3] = β

B[1,1] = ρ

B[3,1] = -θ

B[3,3] = 1.0

C[1,1] = 1.0

D = [ϕ ; 0.0 ; -α]   # ϕ is \phi, the constant in the x equation

Using the notebook “Simple_Model.jl”

- This notebook follows the BK approach step by step:

- Write the model in state-space form.

- Check the BK stability conditions

- Perform the matrices’ partitions

- Simulate the model’s response to an isolated shock upon \(x_t\) with a magnitude of \(+1\).

- And we also implement:

- A simulation of the model’s response to systematic white-noise shocks on \(x_t\).

- A computation of the: (i) autocorrelation function for each variable in this model, (ii) cross-correlation function, (iii) standard deviation.
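The first four steps can be sketched in a self-contained script. This is not the content of “Simple_Model.jl”; it is an illustrative reconstruction that uses the matrices \(\mathcal{A}\), \(\mathcal{B}\), \(\mathcal{C}\) given above, the parameter values of the numerical example, and drops \(\mathcal{D}\) as in the text. The function name `impulse_response` is hypothetical.

```julia
using LinearAlgebra

θ, ρ, β = 1.0, 0.5, 0.75
φ, μ = 2.5, -2.0                     # φ is \varphi in the static equation

# Step 1: state-space form, variables ordered (x, z, y)
A = [1.0 0.0 0.0; -φ 1.0 -μ; 0.0 0.0 β]
B = [ρ 0.0 0.0; 0.0 0.0 0.0; -θ 0.0 1.0]
C = [1.0 0.0 0.0; 0.0 0.0 0.0; 0.0 0.0 0.0]
R = A \ B
U = A \ C

# Step 2: eigenvalues, sorted by modulus, and the BK count
F = eigen(R)
order = sortperm(abs.(F.values))
λ = F.values[order]
n_unstable = count(>(1.0), abs.(λ))  # should equal 1 (one forward-looking variable)

# Step 3: partitions (2 non-forward-looking variables, 1 forward-looking)
Pinv = inv(F.vectors[:, order])
M = Pinv * U
pre, fwd = 1:2, 3:3
P11, P12 = Pinv[pre, pre], Pinv[pre, fwd]
P21, P22 = Pinv[fwd, pre], Pinv[fwd, fwd]
f = -(P22 \ P21)
G = P11 - P12 * (P22 \ P21)
g = G \ (Diagonal(λ[pre]) * G)
h = G \ M[pre, pre]

# Step 4: response to an isolated shock ε0 in the first period
function impulse_response(f, g, h, ε0, T)
    w = zeros(size(g, 1), T)
    v = zeros(size(f, 1), T)
    w[:, 1] = h * ε0
    v[:, 1] = f * w[:, 1]
    for t in 2:T
        w[:, t] = g * w[:, t-1]      # no further shocks
        v[:, t] = f * w[:, t]
    end
    return w, v
end

w, v = impulse_response(f, g, h, [1.0, 0.0], 4)
println("x: ", w[1, :])
println("z: ", w[2, :])
println("y: ", v[1, :])
```

With these parameter values the eigenvalues of \(\mathcal{R}\) are \(0\), \(\rho\) and \(1/\beta\), so the count of unstable eigenvalues matches the single forward-looking variable, and the simulated paths should coincide with the pencil-and-paper values of \(x\), \(y\) and \(z\) obtained earlier.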

Appendix A

Proof of the Blanchard-Kahn method (not required)

The model in state-space representation

Write the model in state space form: \[ \mathcal{A}\left[\begin{array}{l} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]=\mathcal{B}\left[\begin{array}{l} w_{t} \\ v_{t} \end{array}\right]+\mathcal{C}\left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ \varepsilon_{t+1}^{v} \end{array}\right]+\mathcal{D} \tag{A1} \]

\(\mathcal{A}, \mathcal{B}, \mathcal{C}\) are square matrices representing the parametric structure of the model

\(w_{t}, v_{t}\) are vectors with the endogenous variables, and \(\varepsilon_{t}\) is a vector of exogenous random shocks. \(\mathbb{E}_{t}\) is the usual conditional expectations operator. \(\mathcal{D}\) is a vector with constants, and for simplicity, we drop it from the model.

Multiplying both sides of (A1) by \(\mathcal{A}^{-1}\), leads to: \[ \left[\begin{array}{c} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]=\underbrace{\mathcal{A}^{-1} \mathcal{B}}_{\mathcal{R}}\left[\begin{array}{c} w_{t} \\ v_{t} \end{array}\right]+\underbrace{\mathcal{A}^{-1} \mathcal{C}}_{\mathcal{U}}\left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ \varepsilon_{t+1}^{v} \end{array}\right] \tag{A2} \]

Apply the Jordan Decomposition

The Jordan decomposition is given by: \[ \mathcal{R}=P \Lambda P^{-1} \]

\(P\) contains as columns the eigenvectors of \(\mathcal{R}\); \(\Lambda\) is a diagonal matrix containing the eigenvalues of \(\mathcal{R}\) on the main diagonal.

Apply the decomposition to (A2): \[ \left[\begin{array}{c} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]=P \Lambda P^{-1}\left[\begin{array}{c} w_{t} \\ v_{t} \end{array}\right]+\mathcal{U} \cdot\left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ \varepsilon_{t+1}^{v} \end{array}\right] \]

Multiply both sides by \(P^{-1}\): \[ P^{-1}\left[\begin{array}{c} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]=\Lambda P^{-1}\left[\begin{array}{c} w_{t} \\ v_{t} \end{array}\right]+\underbrace{P^{-1} \mathcal{U}}_{\mathcal{M}} \cdot\left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ \varepsilon_{t+1}^{v} \end{array}\right] \]

Matrices partition

Let us assume that there are no shocks affecting the forward-looking block: \[\varepsilon_{t}^v=0 \ , \ \forall t\]

Next, we apply a partition to the matrices: \(P^{-1}\), \(\Lambda\), \(\mathcal{M}\): \[\underbrace{\left[\begin{array}{cc} P_{11} & P_{12} \\ P_{21} & P_{22} \end{array}\right]\left[\begin{array}{c} w_{t+1} \\ \mathbb{E}_{t} v_{t+1} \end{array}\right]}_{\mathbb{E}_t\left[\begin{array}{c} \widetilde{w}_{t+1} \\ \tilde{v}_{t+1} \end{array}\right]}=\left[\begin{array}{cc} \Lambda_{1} & 0 \\ 0 & \Lambda_{2} \end{array}\right] \underbrace{\left[\begin{array}{cc} P_{11} & P_{12} \\ P_{21} & P_{22} \end{array}\right]\left[\begin{array}{c} w_{t} \\ v_{t} \end{array}\right]}_{\left[\begin{array}{c} \widetilde{w}_{t} \\ \tilde{v}_{t} \end{array}\right]}+\underbrace{\left[\begin{array}{ll} \mathcal{M}_{11} & \mathcal{M}_{12} \\ \mathcal{M}_{21} & \mathcal{M}_{22} \end{array}\right]}_{M} \left[\begin{array}{c} \varepsilon_{t+1}^{w} \\ 0 \end{array}\right]\]

Our transformed model looks much easier now: \[ \left[\begin{array}{c} \widetilde{w}_{t+1} \\ \mathbb{E}_{t} \tilde{v}_{t+1} \end{array}\right]=\left[\begin{array}{cc} \Lambda_{1} & 0 \\ 0 & \Lambda_{2} \end{array}\right]\left[\begin{array}{c} \widetilde{w}_{t} \\ \tilde{v}_{t} \end{array}\right]+ \left[\begin{array}{c} M_{11} \\ M_{21} \end{array}\right] \cdot \varepsilon_{t+1}^w \]

System Written as Two Decoupled Blocks

Since \(\Lambda\) is diagonal, the transformed system is uncoupled!

We can solve each block separately.

Transformed model written as a set of decoupled equations:

\[\begin{aligned} {\color{blue}\widetilde{w}_{t+1}} & {\color{blue}=\Lambda_1 \cdot \widetilde{w}_t + M_{11} \cdot \varepsilon_{t+1}^w} \qquad \qquad \text{(Predetermined block)}\\[4pt] \qquad \qquad \qquad \qquad {\color{blue}\mathbb{E}_t \tilde{v}_{t+1}} & {\color{blue}=\Lambda_2 \cdot \widetilde{v}_t + M_{21} \cdot \varepsilon_{t+1}^w} \qquad \qquad \text{(Forward-looking block)} \end{aligned}\]

- We can now apply our well known strategy:

Solve the predetermined transformed block: \[\widetilde{w}_{t}^{*}\]

Solve the forward-looking transformed block: \[\tilde{v}_{t}^{*}\]

Solving the forward-looking block

- The forward-looking block is given by:

\[\mathbb{E}_t \tilde{v}_{t+1}=\Lambda_2 \tilde{v}_t+M_{21} \varepsilon_{t+1}^w\]

- Solve for \(\tilde{v}_t\):

\[\tilde{v}_t=\Lambda_2^{-1} \mathbb{E}_t \tilde{v}_{t+1}-\Lambda_2^{-1} M_{21} \mathbb{E}_t \varepsilon_{t+1}^w\]

- Iterate forward \(n\) times and take into account that \(|\Lambda_2|>1\)

\[\tilde{v}_t=\underbrace{\left(\Lambda_2^{-1}\right)^n \mathbb{E}_t \tilde{v}_{t+n}}_{= \ 0 \ , \ n \rightarrow \infty}-\sum_{i=1}^n\left(\Lambda_2^{-1}\right)^i M_{21} \underbrace{\mathbb{E}_t \varepsilon_{t+i}^w}_{= \ 0}\]

- Then, the only stable solution will be

\[\tilde{v}_t^*=0 \ , \ \forall t \tag{A3}\]

Solving the forward-looking block (cont.)

- Then, as from (A3) we know that:

\[\tilde{v}_t^*=0 \ , \ \forall t \tag{A3'}\]

- And from the partition of \(P^{-1}\) and \(\Lambda\), we know that

\[\tilde{v}_t^*=P_{21} \cdot w_t^*+P_{22} \cdot v_t^* \tag{A4}\]

- Equating (A3’) and (A4), we get:

\[v_t^*=\underbrace{\left[-P_{22}^{-1} P_{21}\right]}_f \cdot w_t^* \tag{A5}\]

- We are back to our old solution: the forward-looking block depends only on the predetermined one.

Solving the predetermined block

Let us iterate the predetermined block backward: \[ \widetilde{w}_{t+1}=\Lambda_1 \widetilde{w}_t+M_{11} \varepsilon_{t+1}^w \]

Write this block one period earlier:

\[\widetilde{w}_t=\Lambda_1 \widetilde{w}_{t-1}+M_{11} \varepsilon_{t}^w\]

- Iterate backward \(n\) times and take into account that \(|\Lambda_1|<1\)

\[\widetilde{w}_t=\underbrace{\Lambda_1^n \widetilde{w}_{t-n}}_{\rightarrow \ 0 \ , \ n \rightarrow \infty}+\sum_{i=0}^{n-1}\Lambda_1^i M_{11} \varepsilon_{t-i}^w\]

- So, the transformed predetermined block is stable: it depends only on current and past shocks, exactly as in eq. (1):

\[\widetilde{w}_t^*=\sum_{i=0}^{\infty}\Lambda_1^i M_{11} \varepsilon_{t-i}^w\]

Solving the predetermined block (cont.)

As the transformed predetermined block is stable, we can find a solution to the original predetermined block

From the partition of \(P^{-1}\) we know that: \[ \widetilde{w}_{t}^{*}=P_{11} \cdot w_{t}^{*}+P_{12} \cdot v_{t}^{*} \tag{A6}\]

Now, inserting eq. (A5) into (A6), we can obtain: \[ \widetilde{w}_{t}^{*}=\underbrace{\left[P_{11}-P_{12} P_{22}^{-1} P_{21}\right]}_{G} \cdot w_{t}^{*} \tag{A7} \]

So, from (A7), we know that: \[\begin{aligned} \widetilde{w}_t^*&=G \cdot w_t^*\\ \widetilde{w}_{t+1}^*&=G \cdot w_{t+1}^* \end{aligned}\tag{A8}\]

Solving the predetermined block (cont.)

But, from the equation labeled (Predetermined block) above, we have: \[ \widetilde{w}_{t+1}=\Lambda_{1} \widetilde{w}_{t}+M_{11} \varepsilon_{t+1}^{w} \tag{A9} \]

By mere substitution of (A8) into (A9), we derive our final result: \[\begin{aligned} & G \cdot w_{t+1}^*=\Lambda_1 (G \cdot w_t^*)+M_{11} \varepsilon_{t+1}^w \\[6pt] & w_{t+1}^*=\underbrace{\left[G^{-1} \Lambda_1 G\right]}_g w_t^*+\underbrace{\left[G^{-1} M_{11}\right]}_h \varepsilon_{t+1}^w \end{aligned}\]

That is, we are back to our old strategy: predetermined variables determined by their past values and by the shocks.

Summarizing

- Write down your model in state space form

- Apply the Jordan decomposition

- Decouple the system into two blocks

- Make sure the eigenvalues satisfy the Blanchard-Kahn conditions

- End up with the two fundamental results:

\[ \color{blue}{\begin{gathered} v_{t}^{*}=\underbrace{\left[-P_{22}^{-1} P_{21}\right]}_{f} \cdot w_{t}^{*} \\ w_{t+1}^{*}=\underbrace{\left[G^{-1} \Lambda_{1} G\right]}_{g} \cdot w_{t}^{*}+\underbrace{\left[G^{-1} M_{11}\right]}_{h} \cdot \varepsilon_{t+1} \\ \qquad \qquad\text{\color{black}{with}} \qquad \qquad G \equiv P_{11}-P_{12}\left(P_{22}\right)^{-1} P_{21} \qquad \qquad\qquad \qquad \qquad \end{gathered}} \]

Readings

- We deal with the Blanchard-Kahn method to solve a DSGE model here.

- Students are not required to replicate the demonstration of this method; however, they are expected to understand the logic behind this method and be able to simulate a model by using a computer and this method.

- So, strictly speaking, there is no required reading. However, for the curious, we leave two references below:

- DeJong, D. N., & Dave, C. (2011). Structural Macroeconometrics (2nd ed.). Princeton University Press. (Chapter 2: Approximating and solving DSGE models, pages 17-22)

- Azzimonti, M., Krusell, P., McKay, A., & Mukoyama, T. (2025). Macroeconomics: A comprehensive textbook for first-year Ph.D. courses in macroeconomics. Available here. See, section “10.6 Solving a dynamic model with linearization”, pages 276-283.